PREMIS/METS for scalability

The scalability issue

SIPs with very large numbers of files (10,000 or more) tend to create very large METS files. This can cause workflow failures and problems with indexing, storing and parsing the metadata. The issue is how to reduce the verbosity of the PREMIS and METS elements without removing information required for preservation.

Approaches to the problem

Currently, a standard Archivematica METS file is based entirely on the description of PREMIS Files. Each File has its own METS amdSec, containing the following: a techMD; multiple Events, each in its own digiprovMD; and three Agents, each in its own digiprovMD. This means that a single File referenced in the METS fileSec has one linked amdSec.

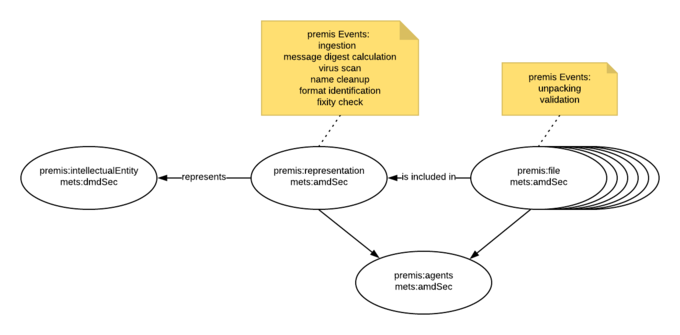
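To make the growth concrete, here is a rough sketch of how the number of metadata sections scales under the current per-File structure. The function name and the figure of six Events per File are illustrative assumptions, not values taken from an actual Archivematica METS file; the three Agents per File come from the description above.

```python
def count_md_sections(num_files: int, events_per_file: int, agents_per_file: int = 3) -> dict:
    """Estimate METS section counts when every File has its own amdSec.

    Each File gets one amdSec with one techMD, plus one digiprovMD per
    Event and one digiprovMD per Agent.
    """
    digiprov_per_file = events_per_file + agents_per_file
    return {
        "amdSec": num_files,
        "techMD": num_files,
        "digiprovMD": num_files * digiprov_per_file,
    }


# Hypothetical SIP: 10,000 files, six Events recorded per file (assumed).
estimate = count_md_sections(num_files=10_000, events_per_file=6)
print(estimate)
```

Because every count is multiplied by the number of files, the size of the METS file grows linearly with the SIP, which is why moving shared Events and Agents out of the per-File amdSec has a large effect.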

We propose using a PREMIS Representation as the level at which to capture information about Events that are common to all Files, and a single amdSec with PREMIS Agents for the entire AIP. This is more feasible with the introduction of PREMIS 3.0, which provides a means for creating an Intellectual Entity and one or more Representations of that Entity. This is a diagram depicting the proposed approach:

We’ve mocked up a PREMIS/METS file ("METS_reduced.xml"). It includes the following changes:

- Update from PREMIS 2.2 to 3.0.

- Create Intellectual Entity and Representation for AIP. Use premis:relationship to link Representation to Intellectual Entity and Files to Representation.

- Move ingestion, message digest calculation, virus scan, name cleanup, format identification and fixity check Events from File to Representation. For eventOutcomeDetailNote, values are, e.g., “13 files ingested”, “13 message digests calculated”, etc. For results with variations, the XML could look like this:

<premis:eventOutcomeInformation>
  <premis:eventOutcome>positive</premis:eventOutcome>
  <premis:eventOutcomeDetail>
    <premis:eventOutcomeDetailNote>11 file formats identified</premis:eventOutcomeDetailNote>
  </premis:eventOutcomeDetail>
</premis:eventOutcomeInformation>
<premis:eventOutcomeInformation>
  <premis:eventOutcome>tentative</premis:eventOutcome>
  <premis:eventOutcomeDetail>
    <premis:eventOutcomeDetailNote>2 file formats identified</premis:eventOutcomeDetailNote>
  </premis:eventOutcomeDetail>
</premis:eventOutcomeInformation>
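As a minimal sketch of how such aggregate outcomes might be generated, the following groups per-file outcomes with `collections.Counter` and emits one eventOutcomeInformation element per distinct outcome. The function name, the note template and the sample data are assumptions made for illustration; this is not how Archivematica currently builds its METS.

```python
from collections import Counter
import xml.etree.ElementTree as ET

PREMIS_NS = "http://www.loc.gov/premis/v3"
ET.register_namespace("premis", PREMIS_NS)


def build_outcome_elements(outcomes, note_template):
    """Return one eventOutcomeInformation element per distinct outcome.

    `outcomes` is a list with one outcome string per file; `note_template`
    must contain a `{count}` placeholder.
    """
    elements = []
    for outcome, count in Counter(outcomes).items():
        info = ET.Element(f"{{{PREMIS_NS}}}eventOutcomeInformation")
        ET.SubElement(info, f"{{{PREMIS_NS}}}eventOutcome").text = outcome
        detail = ET.SubElement(info, f"{{{PREMIS_NS}}}eventOutcomeDetail")
        note = ET.SubElement(detail, f"{{{PREMIS_NS}}}eventOutcomeDetailNote")
        note.text = note_template.format(count=count)
        elements.append(info)
    return elements


# Hypothetical per-file results for a format identification Event.
per_file_outcomes = ["positive"] * 11 + ["tentative"] * 2
outcome_elements = build_outcome_elements(
    per_file_outcomes, "{count} file formats identified"
)
for element in outcome_elements:
    print(ET.tostring(element, encoding="unicode"))
```

Note that this keeps only counts. Which individual files had a tentative result would still need to be recorded somewhere, for example in each File's techMD.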

- Make dateTime a range for aggregate Events. For example:

<premis:eventDateTime>2018-01-22T22:30:12+00:00/2018-01-22T22:36:07+00:00</premis:eventDateTime>
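A range like this could be derived from the individual per-file timestamps by taking the earliest and latest values. The sketch below assumes the timestamps are ISO 8601 strings with a UTC offset, as in the example above; the function name and sample values are hypothetical.

```python
from datetime import datetime


def event_datetime_range(timestamps):
    """Collapse per-file ISO 8601 timestamps into a "start/end" range string."""
    if not timestamps:
        raise ValueError("at least one timestamp is required")
    parsed = [datetime.fromisoformat(ts) for ts in timestamps]
    return f"{min(parsed).isoformat()}/{max(parsed).isoformat()}"


# Hypothetical timestamps for the same Event applied to three files.
per_file_times = [
    "2018-01-22T22:33:40+00:00",
    "2018-01-22T22:30:12+00:00",
    "2018-01-22T22:36:07+00:00",
]
date_range = event_datetime_range(per_file_times)
print(date_range)
```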

- Add messageDigestOriginator to techMD for each file. For example:

<premis:messageDigestOriginator>program="python"; module="hashlib.sha256()"</premis:messageDigestOriginator>
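For illustration, this is roughly how the digest and the originator string could be produced together with Python's `hashlib`. The function name and the dictionary layout are assumptions for this sketch; only the originator string itself is taken from the example above.

```python
import hashlib
import io


def fixity_for_stream(stream, chunk_size=65536):
    """Compute a SHA-256 digest and return PREMIS-style fixity values."""
    sha = hashlib.sha256()
    # Read in chunks so large files are never loaded into memory at once.
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        sha.update(chunk)
    return {
        "messageDigestAlgorithm": "sha256",
        "messageDigest": sha.hexdigest(),
        "messageDigestOriginator": 'program="python"; module="hashlib.sha256()"',
    }


# Hypothetical file content; in practice this would be open(path, "rb").
fixity = fixity_for_stream(io.BytesIO(b"example file content"))
print(fixity["messageDigestOriginator"])
```

Recording the originator on the File means the message digest calculation Event itself no longer has to be repeated per File just to say which tool produced the value.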

- Add formatNote to techMD for each File. For example:

<premis:formatNote>program="Siegfried"; version="1.7.3"</premis:formatNote>
<premis:formatNote>positive identification</premis:formatNote>

- Remove Agents from the amdSec for each File. Create a single amdSec for Agents, and reference it in the ADMID attribute of each File in the fileSec. For example:

<mets:file GROUPID="Group-99e7a918-67e9-4717-924f-6cfd75be3b20" ID="file-99e7a918-67e9-4717-924f-6cfd75be3b20" ADMID="amdSec_2 amdSec_14">

In this case, amdSec_2 is the amdSec for the File, and amdSec_14 is the shared amdSec containing the PREMIS Agents.
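A small sketch of building such a file element follows. The ADMID attribute holds a space-separated list of IDs, so the shared Agents amdSec is simply appended after the File's own amdSec. The function name and arguments are hypothetical.

```python
import xml.etree.ElementTree as ET

METS_NS = "http://www.loc.gov/METS/"
ET.register_namespace("mets", METS_NS)


def build_file_element(file_uuid, file_amdsec_id, agents_amdsec_id):
    """Create a mets:file that points at its own amdSec and the shared Agents amdSec."""
    return ET.Element(
        f"{{{METS_NS}}}file",
        {
            "GROUPID": f"Group-{file_uuid}",
            "ID": f"file-{file_uuid}",
            # ADMID is a space-separated list of amdSec IDs.
            "ADMID": f"{file_amdsec_id} {agents_amdsec_id}",
        },
    )


file_element = build_file_element(
    "99e7a918-67e9-4717-924f-6cfd75be3b20", "amdSec_2", "amdSec_14"
)
print(ET.tostring(file_element, encoding="unicode"))
```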

- Remove objectCharacteristicsExtension from techMD for all Files.

Result in the sample METS file: 5,793 lines reduced to 1,196, just over 20% of the size of the original.

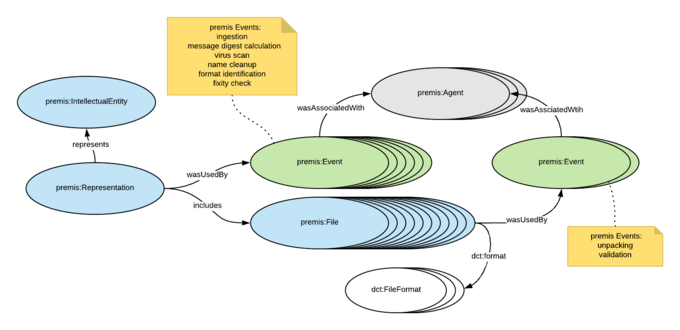

To test further reductions in verbosity, we tried serializing the METS file to RDF using the new draft PREMIS OWL ontology (see https://github.com/PREMIS-OWL-Revision-Team/revise-premis-owl/blob/master/premis3.owl). The diagram "RDF.png" shows how the PREMIS entities are expressed as classes, and the relationships between the various entities. The result was that the number of lines was reduced again, to 365, about 6.3% of the size of the original METS file.

Issues

- We need to consider very carefully how to handle Events where there are variable outputs. For example, ingestion is fine to move to the Representation since all files get ingested, but name cleanup and validation only apply to certain files, and virus scan can have variable results (passed, failed, not scanned, etc.).

- I'm not sure how to capture empty directories. We are currently working on another project which involves describing empty directories as Intellectual Entities, but more analysis is needed to determine how they would fit into the revised structure being proposed here, as the top level (i.e. the dataset) is already considered an Intellectual Entity.

- What should we do with the tool outputs currently placed in objectCharacteristicsExtension in the METS file? They're very verbose, which is why I removed them from the samples, but there is a small amount of important information in them. Unfortunately, the tools don't tend to output to standardized metadata schemas; for example, Exiftool just outputs to raw XML. We could use the FPR to allow users to set rules for tool outputs and schema mappings, but we'll need to have default rules for the majority of Archivematica users who wouldn't want to work at that level of detail with the FPR. We could also choose to capture the tool outputs in log files, but we have to consider the implications of that for searching and reporting.
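One way to explore the variable-output question is to check, per Event type, whether it applies to every file with the same outcome. The sketch below is only a thought experiment under that assumption: the function name, the input structure and the sample data are all hypothetical, and a real rule would likely need to be more nuanced.

```python
def split_events(file_events, all_files):
    """Separate Event types that are uniform across all files from the rest.

    `file_events` maps an Event type to a {file name: outcome} dict.
    An Event type is treated as Representation-level only if it covers
    every file and every file has the same outcome.
    """
    representation_level, file_level = [], []
    for event_type, outcomes in file_events.items():
        covers_all = set(outcomes) == set(all_files)
        uniform = len(set(outcomes.values())) == 1
        if covers_all and uniform:
            representation_level.append(event_type)
        else:
            file_level.append(event_type)
    return representation_level, file_level


# Hypothetical three-file SIP.
files = ["a.tif", "b.tif", "c.pdf"]
events = {
    "ingestion": {"a.tif": "success", "b.tif": "success", "c.pdf": "success"},
    "virus scan": {"a.tif": "pass", "b.tif": "fail", "c.pdf": "pass"},
    "name cleanup": {"b.tif": "cleaned"},
}
representation_level, file_level = split_events(events, files)
print(representation_level, file_level)
```

In this sample only ingestion would move to the Representation, while virus scan and name cleanup would stay at the File level or need split outcomes.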