Dataverse


This page tracks development of a proof-of-concept integration of Archivematica with Dataverse.


Overview

This page captures requirements for ingesting studies (datasets) from Dataverse into Archivematica for long-term preservation.

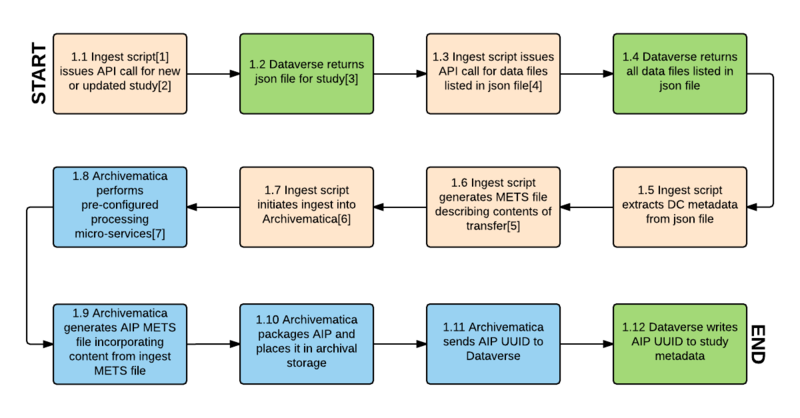

Workflow

- The proposed workflow consists of issuing API calls to Dataverse, receiving content (data files and metadata) for ingest into Archivematica, preparing standard Archivematica Archival Information Packages (AIPs) and placing them in archival storage, and updating the Dataverse study with the AIP UUIDs.

- The analysis is based on tests against the Dataverse instances at https://apitest.dataverse.org/ and https://dataverse-demo.iq.harvard.edu/, the online API documentation at http://guides.dataverse.org/en/latest/api/index.html, and discussions with Dataverse developers and users.

- The proposed integration targets Archivematica 1.5 and higher and Dataverse 4.x.

Workflow diagram

Workflow diagram notes

[1] "Ingest script" refers to an automation tool for bulk ingest into Archivematica. An existing automation tool would be modified to carry out the tasks described in the workflow.

[2] A new or updated study is one that has been published, either for the first time or as a new version, since the last API call.
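Note [2] can be illustrated with a minimal Python sketch, assuming each study returned by the API has already been reduced to a dict carrying the publication timestamp of its current version. The `published` key and the function `select_new_or_updated` are hypothetical; they are not Dataverse API fields or functions.

```python
from datetime import datetime, timezone


def select_new_or_updated(studies, last_poll):
    """Return the studies published since the previous API call.

    A first publication and a new version both count, so only the
    publication time of the current version matters. The 'published'
    key is a hypothetical field holding a timezone-aware datetime.
    """
    return [s for s in studies if s["published"] > last_poll]


last_poll = datetime(2015, 6, 1, tzinfo=timezone.utc)
studies = [
    # Published before the last poll: already ingested, skipped.
    {"entity_id": 101, "version": "1.0", "published": datetime(2015, 5, 20, tzinfo=timezone.utc)},
    # First publication after the last poll.
    {"entity_id": 102, "version": "1.0", "published": datetime(2015, 6, 3, tzinfo=timezone.utc)},
    # New version of an existing study after the last poll.
    {"entity_id": 103, "version": "2.0", "published": datetime(2015, 6, 5, tzinfo=timezone.utc)},
]

for study in select_new_or_updated(studies, last_poll):
    print(study["entity_id"], study["version"])
```

Comparing timestamps keeps the ingest script stateless apart from the time of its last run; an alternative would be to record every (entity_id, version) pair already ingested.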

[3] The JSON file contains citation and other study-level metadata, an entity_id field that identifies the study in Dataverse, version information, a list of data files with their own entity_id values, and an MD5 checksum for each data file.
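Because the JSON file lists an MD5 checksum per data file, the ingest script can check individually downloaded files before handing them to Archivematica. This is a sketch that assumes the file list has been parsed into dicts with `entity_id`, `filename` and `md5` keys; the `filename` key and both function names are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path


def md5_of(path, chunk_size=1 << 20):
    """Compute a file's MD5 hex digest without reading it all into memory."""
    digest = hashlib.md5()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_checksums(study_json, download_dir):
    """Return the entity_ids of files that are missing or fail the MD5 check."""
    failures = []
    for entry in study_json["files"]:
        path = Path(download_dir) / entry["filename"]
        if not path.is_file() or md5_of(path) != entry["md5"].lower():
            failures.append(entry["entity_id"])
    return failures


with tempfile.TemporaryDirectory() as tmp:
    content = b"%PDF-1.4 example"
    Path(tmp, "Study_info.pdf").write_bytes(content)
    study = {"files": [
        {"entity_id": 1, "filename": "Study_info.pdf",
         "md5": hashlib.md5(content).hexdigest()},
        # Never downloaded, so it is reported as a failure.
        {"entity_id": 2, "filename": "missing.sav", "md5": "0" * 32},
    ]}
    failures = verify_checksums(study, tmp)

print(failures)  # [2]
```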

[4] If the JSON file lists a data file with a content_type of tab-separated values, Archivematica issues an API call for a multiple-file ("bundled") content download. For a TSV file, this returns a zipped package containing the .tab file, the original uploaded file, several other derivative formats, a DDI XML file, and file citations in EndNote and RIS formats.
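The branching in note [4] can be sketched as a small URL-selection function. It assumes the Dataverse 4.x Data Access API paths `/api/access/datafile/{id}` (single file) and `/api/access/datafile/bundle/{id}` (zipped bundle), and assumes that tabular files are reported with the MIME type `text/tab-separated-values`; verify both against the API guide for the target Dataverse version. The function name `download_url` is hypothetical.

```python
from urllib.parse import urljoin

# Assumed MIME type that Dataverse reports for ingested tabular files.
TSV_CONTENT_TYPE = "text/tab-separated-values"


def download_url(server, data_file):
    """Pick the Data Access API endpoint for one data file entry.

    Tabular (TSV) files are fetched as a zipped bundle; every other
    file is fetched on its own.
    """
    file_id = data_file["entity_id"]
    # Ignore any parameters such as "; charset=..." after the MIME type.
    mime = data_file.get("content_type", "").split(";")[0].strip()
    if mime == TSV_CONTENT_TYPE:
        path = f"/api/access/datafile/bundle/{file_id}"
    else:
        path = f"/api/access/datafile/{file_id}"
    return urljoin(server, path)


server = "https://apitest.dataverse.org/"
files = [
    {"entity_id": 41, "content_type": "application/pdf"},
    {"entity_id": 42, "content_type": TSV_CONTENT_TYPE},
]
for f in files:
    print(download_url(server, f))
```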

[5] The METS file will consist of a dmdSec containing the DC elements extracted from the JSON file, and a fileSec and structMap indicating the relationships between the files in the transfer (e.g., the original uploaded data file, derivative files generated for tabular data, and metadata/citation files). This will allow Archivematica to apply appropriate preservation micro-services to different file types and to provide an accurate representation of the study in the AIP METS file (step 1.9).
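A dmdSec holding DC elements extracted from the JSON file might be generated along these lines with Python's standard `xml.etree.ElementTree`. This is a sketch only: the JSON keys and their mapping to DC terms in `JSON_TO_DC` are hypothetical, and plain `dc:` elements inside `mdWrap MDTYPE="DC"` are used for illustration rather than as the exact markup Archivematica expects.

```python
import xml.etree.ElementTree as ET

METS_NS = "http://www.loc.gov/METS/"
DC_NS = "http://purl.org/dc/elements/1.1/"
ET.register_namespace("mets", METS_NS)
ET.register_namespace("dc", DC_NS)

# Hypothetical mapping from study-level JSON keys to DC element names.
JSON_TO_DC = [("title", "title"), ("author", "creator"),
              ("date", "date"), ("entity_id", "identifier")]


def build_dc_dmdsec(dmd_id, study):
    """Wrap the DC values found in `study` in a METS dmdSec element."""
    dmd = ET.Element(f"{{{METS_NS}}}dmdSec", ID=dmd_id)
    wrap = ET.SubElement(dmd, f"{{{METS_NS}}}mdWrap", MDTYPE="DC")
    xml_data = ET.SubElement(wrap, f"{{{METS_NS}}}xmlData")
    for json_key, dc_term in JSON_TO_DC:
        if json_key in study:  # skip fields the study does not provide
            ET.SubElement(xml_data, f"{{{DC_NS}}}{dc_term}").text = str(study[json_key])
    return dmd


study = {"title": "YVR weather data", "author": "Example, A.", "date": "2015-06-01"}
dmd = build_dc_dmdsec("dmdSec_1", study)
print(ET.tostring(dmd, encoding="unicode"))
```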

[6] Archivematica ingests all content returned from Dataverse, including the JSON file, plus the METS file generated in step 1.6.

[7] Standard and pre-configured micro-services include: assign UUID, verify checksums, generate checksums, extract packages, scan for viruses, clean up filenames, identify formats, validate formats, extract metadata and normalize for preservation.

Transfer METS file

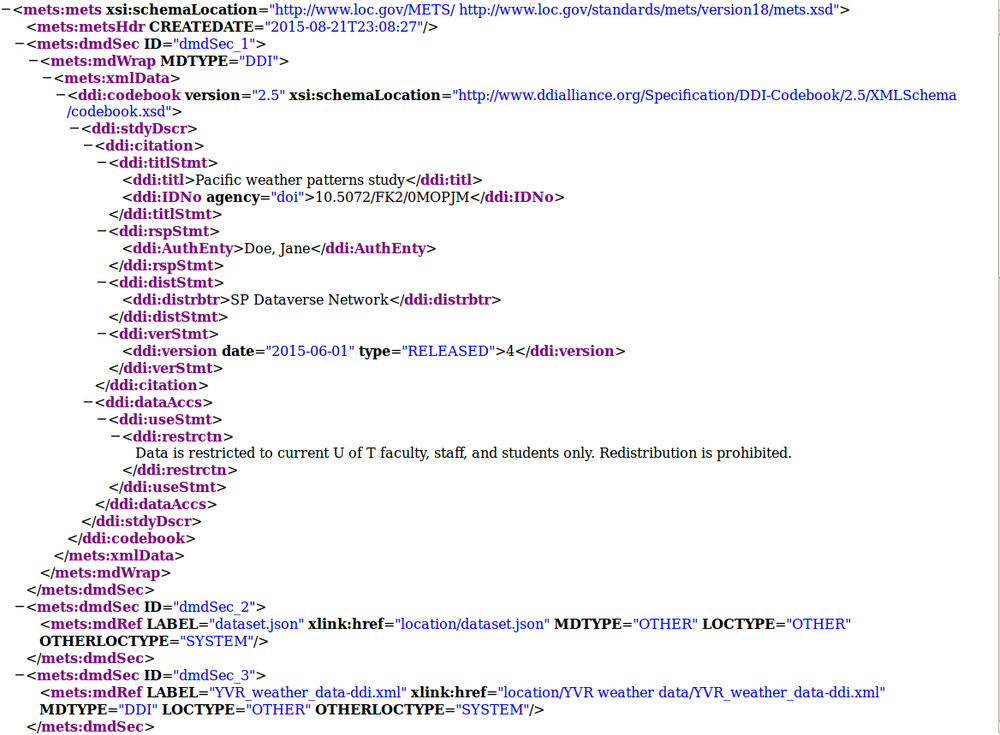

When the ingest script retrieves content from Dataverse, it generates a METS file to allow Archivematica to understand the contents of the transfer and the relationships between its various data and metadata files.

Sample transfer METS file

Original Dataverse study retrieved through API call:

- dataset.json (a JSON file generated by Dataverse consisting of study-level metadata and information about data files)

- Study_info.pdf (a non-tabular data file)

- A zipped bundle consisting of the following:

  - YVR_weather_data.sav (an SPSS SAV file uploaded by the researcher)

  - YVR_weather_data.tab (a TAB file generated from the SPSS SAV file by Dataverse)

  - YVR_weather_data.RData (an R file generated from the SPSS SAV file by Dataverse)

  - YVR_weather_data-ddi.xml, YVR_weather_datacitation-endnote.xml, and YVR_weather_datacitation-ris.ris (three metadata files generated for the TAB file by Dataverse)
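One way the ingest script could sort these files into the original, derivative and metadata file groups, giving every member of an unpacked bundle a shared GROUPID, is sketched below. The suffix rules, the `Group-N` ID format and the function names are hypothetical and inferred only from this sample study.

```python
# Hypothetical suffix rules inferred from the sample study above.
DERIVATIVE_SUFFIXES = (".tab", ".RData")
METADATA_SUFFIXES = ("-ddi.xml", "citation-endnote.xml", "citation-ris.ris")


def classify(filename):
    """Return the METS file group (USE value) for one file name."""
    if filename == "dataset.json" or filename.endswith(METADATA_SUFFIXES):
        return "metadata"
    if filename.endswith(DERIVATIVE_SUFFIXES):
        return "derivative"
    return "original"  # e.g. the PDF or the researcher's SAV upload


def build_file_groups(loose_files, bundles):
    """Return {USE: [(filename, GROUPID or None), ...]}.

    loose_files are fetched individually and get no GROUPID; each entry
    in bundles lists the members of one unzipped bundle, which share a
    GROUPID so their relationship is recorded in the fileSec.
    """
    groups = {"original": [], "derivative": [], "metadata": []}
    for name in loose_files:
        groups[classify(name)].append((name, None))
    for n, members in enumerate(bundles, start=1):
        group_id = f"Group-{n}"
        for name in members:
            groups[classify(name)].append((name, group_id))
    return groups


loose = ["dataset.json", "Study_info.pdf"]
bundle = ["YVR_weather_data.sav", "YVR_weather_data.tab", "YVR_weather_data.RData",
          "YVR_weather_data-ddi.xml", "YVR_weather_datacitation-endnote.xml",
          "YVR_weather_datacitation-ris.ris"]
groups = build_file_groups(loose, [bundle])
for use, entries in groups.items():
    print(use, entries)
```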

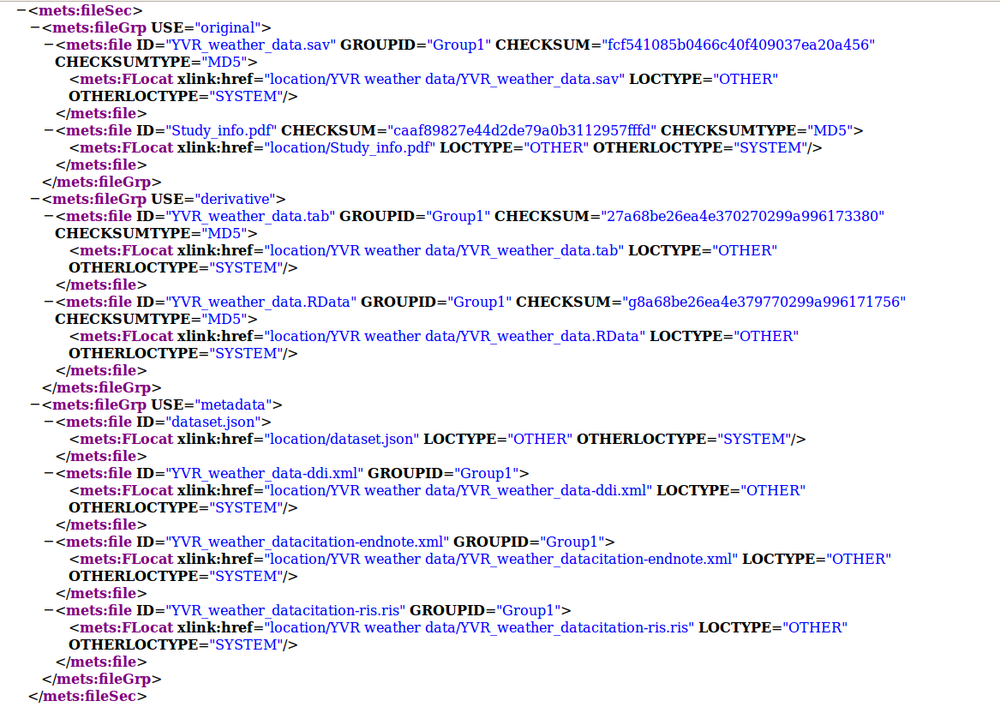

Resulting transfer METS file

- The fileSec in the METS file consists of three file groups: USE="original" (the PDF and SAV files), USE="derivative" (the TAB and R files), and USE="metadata" (the JSON file and the three metadata files from the zipped bundle).

- All of the files unpacked from the Dataverse bundle have a GROUPID attribute to indicate the relationship between them. If the transfer had consisted of more than one bundle, each set of unpacked files would have its own GROUPID.

- Three dmdSecs have been generated:

  - dmdSec_1, consisting of a small number of study-level DC terms

  - dmdSec_2, consisting of an mdRef to the JSON file

  - dmdSec_3, consisting of an mdRef to the DDI XML file

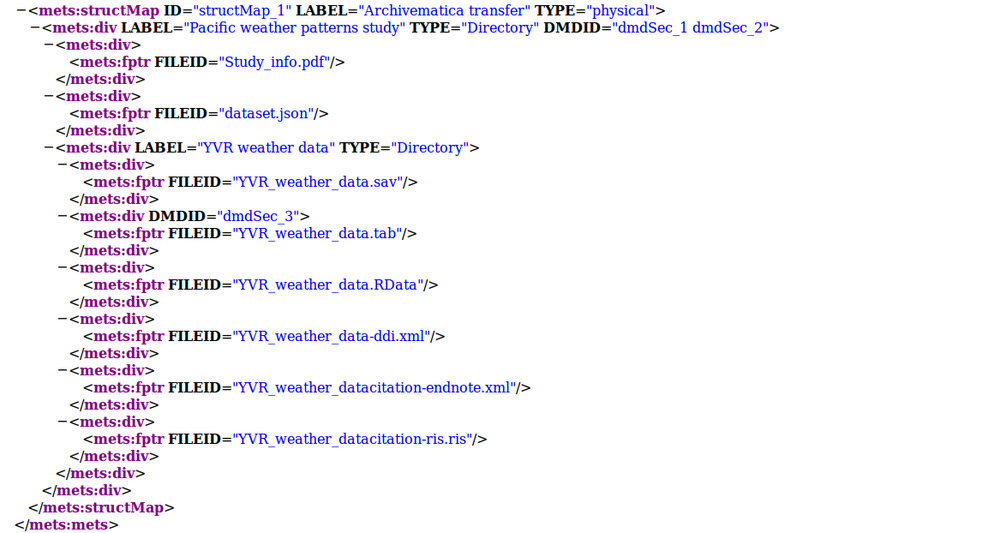

- In the structMap, dmdSec_1 and dmdSec_2 are linked to the study as a whole, while dmdSec_3 is linked to the TAB file. The EndNote and RIS files have not been made into dmdSecs because they contain small subsets of metadata that are already captured in dmdSec_1 and the DDI XML file.
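The linking described for the structMap can be sketched with `xml.etree.ElementTree`. In METS the DMDID attribute belongs on a `div`, so the study-level div carries dmdSec_1 and dmdSec_2 and the TAB file gets its own child div carrying dmdSec_3. The FILEID values, the study label, the div TYPE values and the function name are hypothetical and for illustration only.

```python
import xml.etree.ElementTree as ET

METS_NS = "http://www.loc.gov/METS/"
ET.register_namespace("mets", METS_NS)


def m(tag):
    """Qualify a tag name with the METS namespace."""
    return f"{{{METS_NS}}}{tag}"


def build_struct_map(study_label, study_dmd_ids, files, file_dmd_ids):
    """Build a structMap linking dmdSecs to the study and to single files.

    files: list of (FILEID, filename) pairs from the fileSec.
    file_dmd_ids: {filename: [dmdSec IDs]} for file-level metadata.
    """
    struct_map = ET.Element(m("structMap"), TYPE="physical")
    study_div = ET.SubElement(struct_map, m("div"), TYPE="Directory",
                              LABEL=study_label, DMDID=" ".join(study_dmd_ids))
    for file_id, name in files:
        attrs = {"TYPE": "Item", "LABEL": name}
        if name in file_dmd_ids:
            attrs["DMDID"] = " ".join(file_dmd_ids[name])
        item = ET.SubElement(study_div, m("div"), attrs)
        ET.SubElement(item, m("fptr"), FILEID=file_id)
    return struct_map


files = [("file-1", "Study_info.pdf"), ("file-2", "YVR_weather_data.tab")]
struct_map = build_struct_map("YVR weather study", ["dmdSec_1", "dmdSec_2"],
                              files, {"YVR_weather_data.tab": ["dmdSec_3"]})
print(ET.tostring(struct_map, encoding="unicode"))
```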